Breaking Down 0G’s Four-Layer Architecture: How Chain, Storage, DA, and Compute Power On-Chain AI

As AI agents, generative AI, and on-chain intelligent applications continue to advance, traditional blockchains are increasingly unable to meet the demands of high-frequency computation and large-scale data processing. Blockchains were originally built to support transactions and asset transfers, but in AI-driven scenarios, compute-intensive inference and continuous data access have become the dominant workloads.

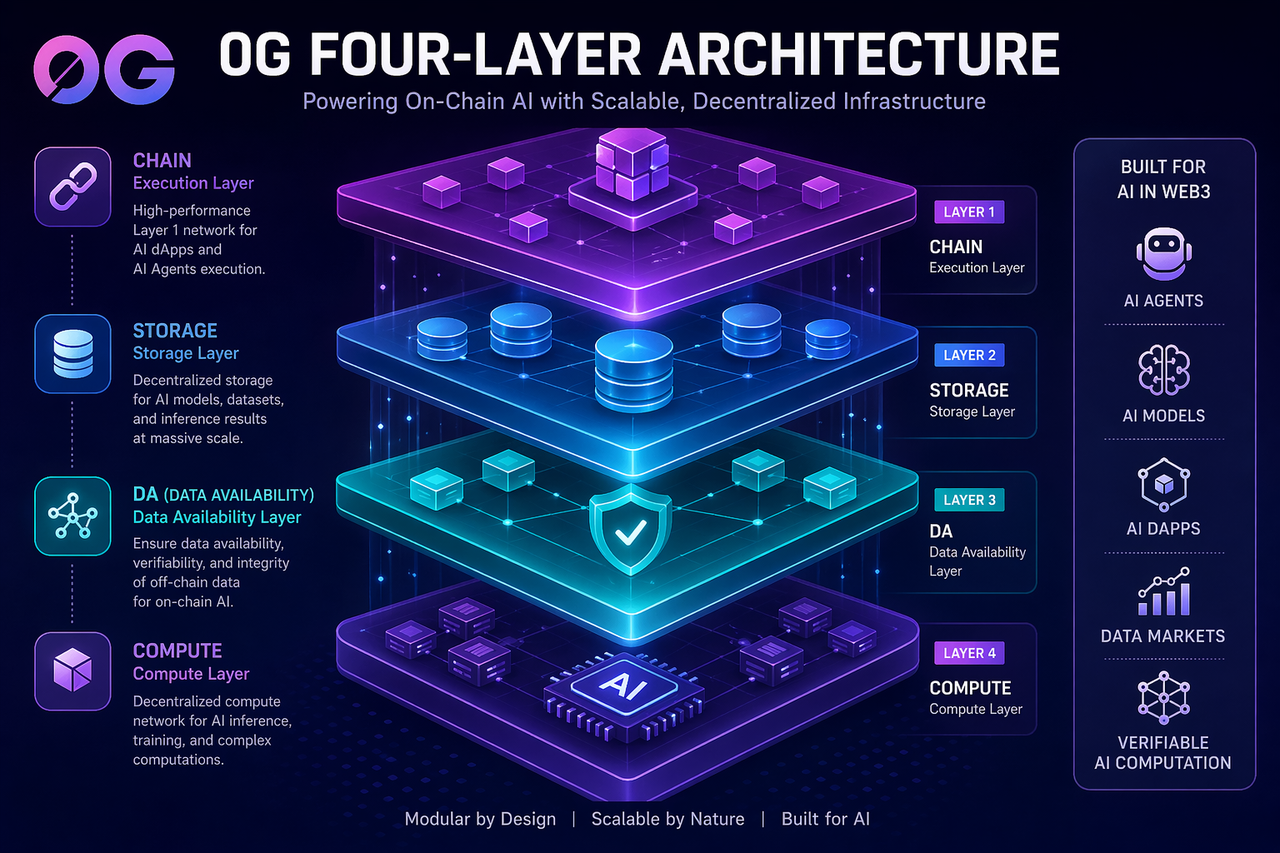

Against this backdrop, 0G introduces an AI-oriented infrastructure design. Through a modular four-layer architecture, it provides a scalable runtime environment for on-chain AI, enabling blockchains to evolve from “transaction execution networks” into “AI compute infrastructure.”

0G’s Position in the AI Infrastructure Stack

0G is not a general-purpose blockchain in the traditional sense. Instead, it is a Layer 1 infrastructure network purpose-built for AI applications.

Its primary objective is to support AI agents and enable the deployment of on-chain AI applications, allowing developers to build AI systems without relying on centralized cloud providers.

Within the broader AI and Web3 landscape, 0G sits firmly at the infrastructure layer rather than at the application or single-protocol layer, which gives it greater architectural flexibility and long-term scalability.

The Design Logic Behind 0G’s Four-Layer Architecture

0G’s system is composed of four core modules: Chain, Storage, Data Availability (DA), and Compute. These components do not operate in isolation; together they form a complete execution pipeline for AI workloads.

The Chain layer handles execution and state management, acting as the logic layer for AI applications. Storage is responsible for data persistence, supporting AI models and training datasets. The DA layer ensures that off-chain data remains verifiable and accessible. Compute provides the distributed processing power required for AI inference and complex tasks.

At its core, this design decomposes the traditional monolithic blockchain into specialized modules, allowing each layer to be optimized for AI-specific demands.

Chain (Execution Layer): The Operational Core of AI Applications

Within the 0G architecture, the Chain layer processes all on-chain logic, including AI agent interactions, state updates, and application calls.

Unlike traditional blockchains that focus primarily on transaction throughput, 0G Chain is optimized for high-frequency interactions typical of AI systems, enabling continuous operation of intelligent applications.

Storage (Data Layer): The Foundation for AI Data

The Storage layer manages AI-related data, including model parameters, training datasets, and inference outputs.

Because AI applications require significantly more data than standard blockchain use cases, this layer plays a critical role in scalability. It offers cost-efficient storage and supports long-term data persistence, allowing AI models to evolve over time within a decentralized environment.
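The article does not describe how 0G Storage identifies or verifies stored data. One common technique for large blobs such as model weights is content addressing. The data is split into chunks and keyed by a Merkle root computed over the chunk hashes, so any retrieved copy can be checked against its identifier. The Python sketch below illustrates that general idea only. `ContentAddressedStore`, `merkle_root` and the 256 KiB chunk size are hypothetical names and values chosen for illustration, not 0G's API.

```python
import hashlib

# Hypothetical chunk size (256 KiB), chosen only for illustration.
CHUNK_SIZE = 256 * 1024


def split_into_chunks(data: bytes, chunk_size: int = CHUNK_SIZE) -> list[bytes]:
    """Split a blob (e.g. serialized model weights) into fixed-size chunks."""
    if not data:
        return [b""]  # an empty blob still gets exactly one (empty) leaf
    return [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]


def merkle_root(chunks: list[bytes]) -> bytes:
    """Binary Merkle root over SHA-256 leaf hashes."""
    level = [hashlib.sha256(c).digest() for c in chunks]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # pair the last node with itself on odd levels
        level = [hashlib.sha256(level[i] + level[i + 1]).digest()
                 for i in range(0, len(level), 2)]
    return level[0]


class ContentAddressedStore:
    """Toy in-memory store that keys each blob by its Merkle root."""

    def __init__(self) -> None:
        self._chunks: dict[bytes, list[bytes]] = {}

    def put(self, data: bytes) -> bytes:
        chunks = split_into_chunks(data)
        root = merkle_root(chunks)
        self._chunks[root] = chunks
        return root

    def get(self, root: bytes) -> bytes:
        chunks = self._chunks[root]
        # Recompute the root so corrupted or substituted chunks are detected.
        if merkle_root(chunks) != root:
            raise ValueError("stored chunks do not match the requested root")
        return b"".join(chunks)


store = ContentAddressedStore()
weights = bytes(range(256)) * 4096  # 1 MiB of dummy "model weights" -> 4 chunks
root = store.put(weights)
assert store.get(root) == weights
print(root.hex())
```

The identifier is derived from the content itself. A reader who knows `root` can therefore detect tampering without trusting the node that served the data.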

DA (Data Availability Layer): Ensuring Trust in On-Chain AI

The Data Availability layer ensures that off-chain data can be verified and accessed at any time, providing a foundation for transparency and trust in AI computations.

When AI agents execute tasks autonomously, the DA layer guarantees that the data behind those tasks has been published and remains retrievable, so anyone can check the outputs against it. This is especially important for decentralized AI systems, where trust cannot rely on centralized intermediaries.
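This is a minimal sketch of the generic idea behind data availability checks. It does not show 0G's actual protocol. It assumes the publisher posts only a Merkle root and serves chunks with inclusion proofs on request. A verifier samples random chunk indices and rejects if any sampled chunk is missing or fails its proof. All function names are hypothetical. Production DA systems typically add erasure coding so that a handful of samples gives high confidence, and this sketch omits that step.

```python
import hashlib
import random
from typing import Callable, Optional


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(chunks: list[bytes]) -> list[list[bytes]]:
    """All levels of a binary Merkle tree, leaves first, root last."""
    level = [h(c) for c in chunks]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level = level + [level[-1]]  # odd level: pair last node with itself
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def prove(levels: list[list[bytes]], index: int) -> list[bytes]:
    """Sibling hashes from leaf `index` up to (but excluding) the root."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        proof.append(level[sibling] if sibling < len(level) else level[index])
        index //= 2
    return proof


def verify(root: bytes, chunk: bytes, index: int, proof: list[bytes]) -> bool:
    """Recompute the path from the chunk to the root using the proof."""
    node = h(chunk)
    for sibling in proof:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node == root


# A fetcher returns (chunk, proof), or None if the node withholds that chunk.
Fetcher = Callable[[int], Optional[tuple[bytes, list[bytes]]]]


def sample_availability(root: bytes, fetch: Fetcher, num_chunks: int,
                        samples: int, rng: random.Random) -> bool:
    """Accept only if every randomly sampled chunk is served and verifies."""
    for index in rng.sample(range(num_chunks), k=min(samples, num_chunks)):
        response = fetch(index)
        if response is None:
            return False
        chunk, proof = response
        if not verify(root, chunk, index, proof):
            return False
    return True


chunks = [f"agent-input-{i}".encode() for i in range(10)]
levels = build_levels(chunks)
root = levels[-1][0]


def honest(i: int):
    return chunks[i], prove(levels, i)


def withholding(i: int):
    return None if i == 3 else honest(i)


rng = random.Random(0)
print(sample_availability(root, honest, len(chunks), 4, rng))        # True
print(sample_availability(root, withholding, len(chunks), 10, rng))  # False
```

The second call samples all ten indices, so it is guaranteed to hit the withheld chunk. With fewer samples, detection becomes probabilistic, which is why real systems pair sampling with erasure coding.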

Compute (Computation Layer): The Engine of AI Execution

The Compute layer provides decentralized computational resources and is one of the most critical components of the 0G architecture.

It supports AI model inference, complex computations, and distributed workloads. Unlike traditional blockchains that handle only lightweight operations, this layer enables 0G to run meaningful AI workloads at scale.
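The article does not say how 0G checks the work done by compute providers. One simple approach from distributed computing is redundant execution. The same task is sent to several providers, and an output is accepted only if enough of them agree. The sketch below assumes deterministic inference (for example, greedy decoding with fixed weights) so that honest providers return identical outputs. It compares SHA-256 digests, as a provider might submit a hash commitment rather than the full result. `run_redundant_inference` and the model functions are hypothetical stand-ins, not 0G's mechanism.

```python
import hashlib
from collections import Counter
from typing import Callable

# A provider is modeled as a function from a prompt to an output string.
Provider = Callable[[str], str]


def output_digest(output: str) -> str:
    return hashlib.sha256(output.encode()).hexdigest()


def run_redundant_inference(prompt: str, providers: list[Provider], quorum: int) -> str:
    """Run the same task on every provider and return the majority output.

    Raises RuntimeError if the most common output has fewer than `quorum` votes.
    """
    if not providers:
        raise ValueError("at least one provider is required")
    outputs: dict[str, str] = {}
    votes: Counter = Counter()
    for provider in providers:
        result = provider(prompt)
        digest = output_digest(result)
        outputs[digest] = result
        votes[digest] += 1
    digest, count = votes.most_common(1)[0]
    if count < quorum:
        raise RuntimeError(f"no quorum: best output had {count}/{len(providers)} votes")
    return outputs[digest]


def honest_model(prompt: str) -> str:
    return prompt.upper()  # stand-in for a deterministic model


def faulty_model(prompt: str) -> str:
    return "garbage"


print(run_redundant_inference("hello", [honest_model, honest_model, faulty_model], quorum=2))
# HELLO
```

Redundancy multiplies the compute cost by the number of providers. Systems that want to avoid this often turn to other verification schemes, but majority voting is the easiest to reason about.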

How Do the Four Layers Work Together to Power On-Chain AI?

The true value of 0G lies not in any single module, but in how these four layers interact.

The Chain layer provides execution logic, Storage supplies the data foundation, DA ensures data integrity and availability, and Compute delivers the processing power. Together, they form a complete execution loop that allows AI agents to operate continuously in a decentralized environment.
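The loop described above can be sketched with in-memory stand-ins for each layer. This is a toy model under stated assumptions. The class names, the reverse-the-bytes "model" and the rule that the chain records only steps whose data is available are all hypothetical. They are chosen only to show how responsibilities divide between the layers.

```python
import hashlib
from dataclasses import dataclass, field


def digest(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


@dataclass
class Storage:
    """Stand-in for the Storage layer: blobs keyed by their hash."""
    blobs: dict[str, bytes] = field(default_factory=dict)

    def put(self, data: bytes) -> str:
        key = digest(data)
        self.blobs[key] = data
        return key

    def get(self, key: str) -> bytes:
        return self.blobs[key]


@dataclass
class DataAvailability:
    """Stand-in for the DA layer: the set of published commitments."""
    published: set[str] = field(default_factory=set)

    def publish(self, key: str) -> None:
        self.published.add(key)

    def is_available(self, key: str) -> bool:
        return key in self.published


class Compute:
    """Stand-in for the Compute layer: a placeholder 'model'."""

    def infer(self, data: bytes) -> bytes:
        return data[::-1]  # toy inference: reverse the input bytes


@dataclass
class Chain:
    """Stand-in for the Chain layer: an append-only log of agent steps."""
    log: list[tuple[str, str]] = field(default_factory=list)

    def record(self, input_key: str, output_key: str, da: DataAvailability) -> None:
        # Only settle steps whose input and output were both made available.
        if not (da.is_available(input_key) and da.is_available(output_key)):
            raise RuntimeError("refusing to record a step with unavailable data")
        self.log.append((input_key, output_key))


def agent_step(task: bytes, storage: Storage, da: DataAvailability,
               compute: Compute, chain: Chain) -> str:
    input_key = storage.put(task)                     # Storage: persist the task
    da.publish(input_key)                             # DA: publish its commitment
    output = compute.infer(storage.get(input_key))    # Compute: run inference
    output_key = storage.put(output)                  # Storage: persist the result
    da.publish(output_key)                            # DA: publish the result commitment
    chain.record(input_key, output_key, da)           # Chain: settle the step
    return output_key


storage, da, compute, chain = Storage(), DataAvailability(), Compute(), Chain()
out_key = agent_step(b"summarize block 42", storage, da, compute, chain)
print(storage.get(out_key))  # b'24 kcolb ezirammus'
print(len(chain.log))        # 1
```

Each layer sits behind its own small interface, which mirrors the modular argument in the text. Any stand-in could be replaced by a real implementation without changing `agent_step`.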

This architecture effectively transforms blockchain from a “ledger system” into an “AI computation system,” capable of supporting complex intelligent applications.

Why Does AI Require This Type of Architecture?

AI applications differ fundamentally from traditional blockchain use cases. Their core challenges revolve around computational intensity, heavy data dependence, and the need for verifiable results.

Traditional Layer 1 networks are optimized for transaction processing, but AI applications require ongoing inference and large-scale data access. A single execution layer is no longer sufficient.

By modularizing these capabilities, 0G allows each layer to specialize, significantly improving overall system efficiency and scalability.

Industry Implications of 0G’s Architecture

As AI and Web3 continue to converge, infrastructure is shifting from general-purpose blockchains toward specialized AI networks.

0G’s four-layer design represents a new paradigm, moving from transaction-first to compute-first architecture. This shift enables blockchains to serve as foundational infrastructure for AI applications rather than just financial systems.

In the future, on-chain systems may evolve beyond asset networks into core computational layers for AI.

Conclusion

Through its modular four-layer architecture (Chain, Storage, Data Availability, and Compute), 0G establishes a decentralized infrastructure network tailored for AI applications.

This design enables AI agents and on-chain AI applications to operate efficiently in decentralized environments, optimizing performance, data handling, and computational capabilities. As a result, it contributes to the broader evolution of AI-focused Layer 1 infrastructure.

FAQs

What is 0G’s four-layer architecture?

It consists of Chain, Storage, Data Availability (DA), and Compute, which together support the execution of on-chain AI applications.

Why does 0G use a modular design?

Because AI applications require heavy computation, large-scale storage, and strong verifiability, a modular architecture improves scalability and efficiency.

What is the role of the DA layer in 0G?

The DA layer ensures that data is verifiable and accessible, forming the foundation for trusted AI computation.

Why is the Compute layer important?

It provides decentralized AI computing power and supports model inference and complex task execution.

How is 0G different from traditional blockchains?

Traditional blockchains focus on transaction processing, while 0G is optimized for AI workloads, making it better suited for compute-intensive applications.
