AI Token Economy Is Taking Shape: NVIDIA CEO Jensen Huang Reveals Why Compute Is the “New Electricity”

In a recent in-depth interview, Jensen Huang delivered a powerful insight: computing is shifting from a “cost” to a “product” that directly creates value.

While this concept may seem abstract, it fundamentally addresses a larger question—what is the core factor of production in the AI era?

As NVIDIA’s founder, Huang has a distinct vantage point. He isn’t discussing user growth at the application layer or parameter scale at the model layer. Instead, he’s redefining something at the foundation: whether computing itself is becoming a tradable economic unit.

From Cost Center to Production Engine

Looking back to the internet era, data centers had a singular role.

They stored data, processed requests, and supported applications—essentially functioning as part of a company’s costs. Whether cloud computing or SaaS, the focus was on “optimizing cost structure,” not directly generating sellable output.

AI changed this equation. As models began generating text, images, code, and executing complex tasks, each computation became more than resource consumption—it became “producing results.” These results can be consumed by users or directly priced.

Thus, data centers are no longer just cost centers; they now operate like factories. Their inputs are electricity, chips, and models; their outputs are content, decisions, and even automated actions. All these outputs are unified under one concept—Token.

Token: The Smallest Unit of Production in the AI Era

Here, Token refers not to cryptocurrency tokens, but to the basic unit of measurement in AI systems. When you ask a model a question, you consume Tokens; when the model generates an answer, it is “producing” Tokens. API pricing is fundamentally based on Token usage.
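To make Token-based pricing concrete, here is a minimal sketch of how a single request might be billed. The `TokenPricing` class, the `request_cost` function, and the prices are hypothetical and chosen only for illustration; they do not come from the interview or from any real provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    """Hypothetical prices, in USD per one million Tokens."""
    input_per_million: float
    output_per_million: float


def request_cost(pricing: TokenPricing, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request billed by Token usage."""
    total = (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    )
    return total / 1_000_000


# Illustrative numbers only (assumption): input is priced lower than output.
pricing = TokenPricing(input_per_million=2.0, output_per_million=8.0)
cost = request_cost(pricing, input_tokens=1_500, output_tokens=500)
print(f"${cost:.4f}")  # prints "$0.0070"
```

Separating input and output prices is a design choice in this sketch; a flat per-Token price would simply use the same value for both fields.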

This may sound like a technical detail, but the real change is: for the first time, computation can be precisely measured, priced, and traded as units.

Historically, this is a critical milestone. In the industrial era, electricity became infrastructure because it could be measured (kilowatt-hours); in the internet era, bandwidth and storage became commercialized because they could be billed.

Now, AI has turned “intelligence” itself into a measurable resource. Token is not just a technical concept—it’s emerging as a new “economic unit.”

Why Computing Power Will Be Consumed Like Electricity

Huang made an aggressive prediction in the interview: in the future, spending on computation may account for a much larger share of the economy.

The logic behind this parallels the development of electricity.

When electricity first appeared, it was simply part of industrial costs. But as electrification spread, nearly every industry became dependent on electricity, which ultimately became an indispensable foundational resource.

AI may be following the same path. As more tasks are handled by AI—writing, coding, designing, analyzing, decision-making—the essence behind these activities is the consumption of computing power and Tokens.

This creates a new consumption structure:

- Enterprises are no longer simply buying software; they are “purchasing intelligence”
- Users are no longer just using tools; they are “consuming computation”
- Economic activity is increasingly revolving around computing power

This is the concept of “computing power as electricity.”

The Real Turning Point: Inference Costs Are Exploding

Many people equate AI costs with training models, but Huang repeatedly emphasized a shift in this interview: inference is becoming the primary cost driver. Early AI was more like a passive tool: you asked, it answered, and computation was discrete. Now, AI is evolving into a continuously operating system. With the rise of Agents in particular, the situation has changed dramatically:

- A task is no longer a single call, but multiple rounds of inference
- A system can run multiple AIs simultaneously
- AI can autonomously call other AI

This means computation has shifted from “per-use consumption” to “continuous burning.” Huang’s statement was direct: “Thinking is expensive.”

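Once AI starts “thinking,” demand for computing power stops growing in step with the number of requests: each task multiplies into many rounds of inference and sub-calls, so consumption compounds rather than growing linearly.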
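The gap between a single call and a multi-round Agent task can be sketched with simple arithmetic. The model below assumes that each round re-reads the full accumulated context and then appends its own output to it. That assumption, the function names, and the numbers are illustrative and are not figures from the interview.

```python
def single_call_tokens(prompt_tokens: int, output_tokens: int) -> int:
    """Tokens processed by one question-and-answer call."""
    return prompt_tokens + output_tokens


def agent_task_tokens(prompt_tokens: int, output_per_round: int, rounds: int) -> int:
    """Tokens processed by an Agent that reasons over several rounds.

    Assumption: every round reads the whole context so far, then its
    output is appended to the context for the next round.
    """
    total = 0
    context = prompt_tokens
    for _ in range(rounds):
        total += context + output_per_round  # read context, write output
        context += output_per_round          # output becomes future input
    return total


one_shot = single_call_tokens(1_000, 500)
agent = agent_task_tokens(1_000, 500, rounds=10)
print(one_shot, agent, agent / one_shot)  # prints "1500 37500 25.0"
```

Under these assumptions, ten rounds cost 25 times as many Tokens as one call, not 10 times, because the context grows with every round.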

From User Growth to Agent Growth

If the internet era’s growth logic was “number of users,” then in the AI era, it may become “number of Agents.” This is an easily overlooked but crucial shift. Users are finite, but Agents can be replicated.

An AI assistant can serve multiple tasks simultaneously; a system can run thousands of Agents at once; an Agent can even generate new Agents. This leads to a new growth model: demand for computing power is no longer determined by people, but by the “number of machines.” And machine growth has no natural ceiling.
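A rough way to see why Agent counts can outrun user counts is to model Agents that each spawn a fixed number of new Agents per generation. The function name and the spawn factor are hypothetical. The sketch also leaves out any budget or hardware cap, which a real deployment would impose.

```python
def agents_per_generation(initial_agents: int, spawn_per_agent: int, generations: int) -> list[int]:
    """Return the number of newly created Agents in each generation.

    Assumption: every Agent in one generation spawns the same number
    of Agents in the next generation.
    """
    counts = [initial_agents]
    for _ in range(generations):
        counts.append(counts[-1] * spawn_per_agent)
    return counts


counts = agents_per_generation(initial_agents=1, spawn_per_agent=3, generations=5)
total_agents = sum(counts)
print(counts)        # prints "[1, 3, 9, 27, 81, 243]"
print(total_agents)  # prints "364"
```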

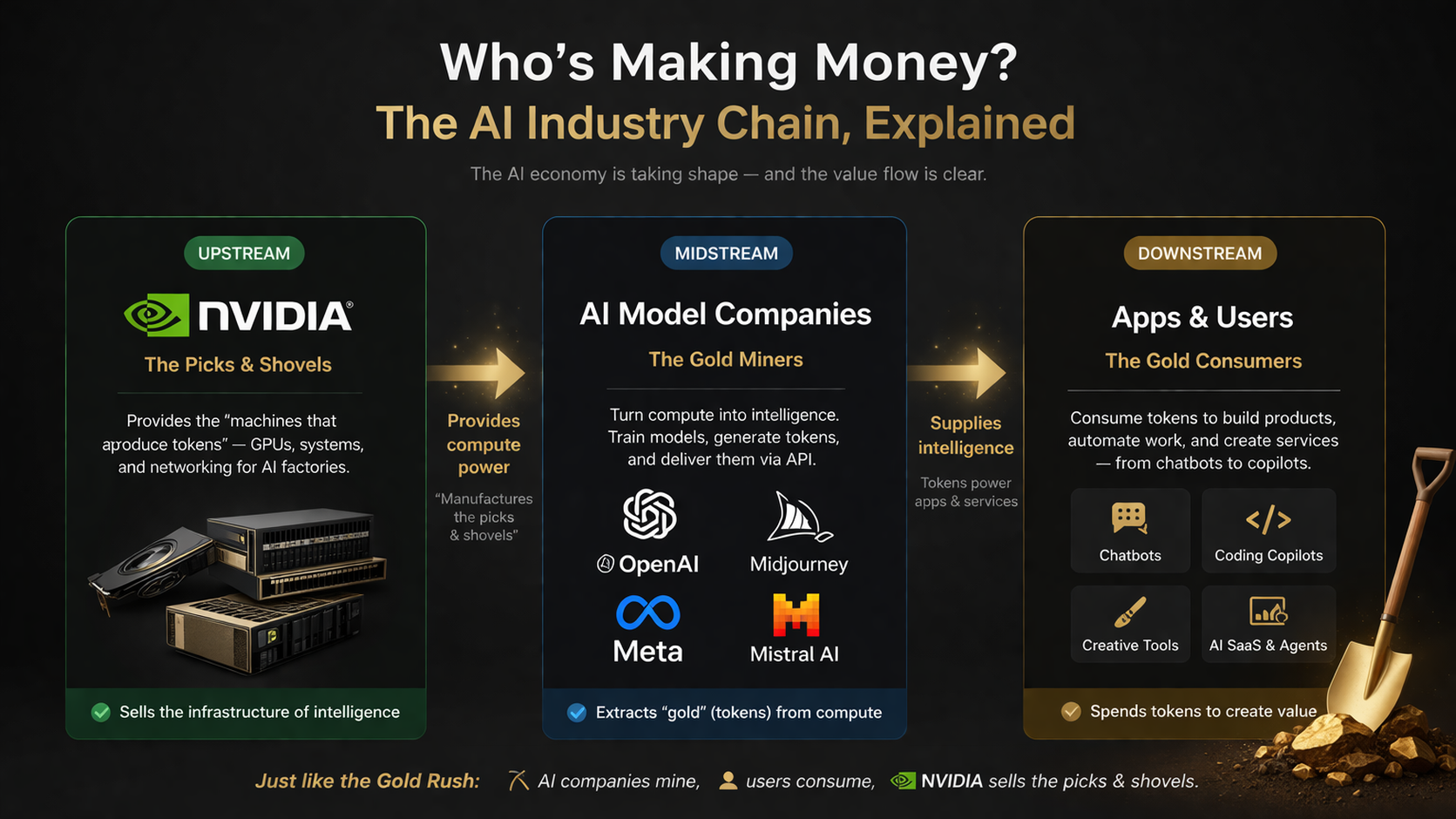

Who Profits? A Clear Industry Chain

Within this structure, the entire AI industry chain is very clear.

At one end are model companies, which convert computing power into Tokens and provide them to users. At the other end is the application layer, responsible for consuming these Tokens and building products and services. Upstream are companies like NVIDIA, which supply the “machines that produce Tokens.”

This setup is reminiscent of the gold rush era:

- AI companies are “mining gold”
- Users are “consuming gold”
- NVIDIA is “selling shovels”

As long as there is demand for “gold,” selling shovels will remain a strong business.

The Overlooked Variable: Energy, Not Chips

Many believe the bottleneck for AI is chips, but Huang offered a more interesting perspective in this interview: the real constraint may be energy.

However, his view isn’t that “electricity is insufficient,” but that “utilization is inefficient.”

Traditional power grids are designed for extreme peak loads and are idle much of the time. AI data centers have an advantage—they can adjust dynamically.

For example, they can reduce performance, delay tasks, or shift workloads, all without impacting the overall system. This means computing power systems may be more flexible than power systems. Such flexibility will become a key factor in future competition.
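The idea of delaying or shifting workloads can be sketched as a small greedy scheduler that works under an hourly power cap. The job names, power figures, and the one-hour-per-job simplification are all assumptions made for illustration. This is not a description of how any real data center schedules work.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    power_kw: float
    deferrable: bool  # True if the job can wait for a later hour


def schedule(jobs: list[Job], caps_kw: list[float]) -> list[list[str]]:
    """Greedy sketch: each job runs for one hour.

    Non-deferrable jobs always run in the first hour they are pending.
    Deferrable jobs run only if they fit under that hour's power cap;
    otherwise they are delayed to the next hour.
    """
    pending = list(jobs)
    plan: list[list[str]] = []
    for cap in caps_kw:
        used = 0.0
        running: list[str] = []
        waiting: list[Job] = []
        # Stable sort: non-deferrable jobs (False) are placed first.
        for job in sorted(pending, key=lambda j: j.deferrable):
            if not job.deferrable or used + job.power_kw <= cap:
                used += job.power_kw
                running.append(job.name)
            else:
                waiting.append(job)
        plan.append(running)
        pending = waiting
    return plan


jobs = [
    Job("chat-inference", 60.0, deferrable=False),
    Job("batch-embedding", 50.0, deferrable=True),
    Job("nightly-eval", 30.0, deferrable=True),
]
plan = schedule(jobs, caps_kw=[100.0, 150.0])
print(plan)  # prints "[['chat-inference', 'nightly-eval'], ['batch-embedding']]"
```

In the first hour the 50 kW batch job does not fit under the 100 kW cap and is pushed to the second hour, while the latency-sensitive job is unaffected.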

A Shift Closer to an Industrial Revolution

Piecing these clues together reveals a broader vision.

- Token turns computation into a commodity
- AI factories give data centers production attributes
- Inference costs drive continuous consumption of computing power
- Agents expand demand without limit

These changes combine to create not just a technical upgrade, but a reconstruction of the production model. If the internet transformed information flow, AI is transforming the “production process itself.” That’s why Huang uses language reminiscent of industrialization to describe AI.

Because in his definition, AI is not just software—it is a new production system.

Conclusion

When computation can be measured, priced, and traded; when data centers operate like factories, continuously delivering value; when computing power is consumed like electricity—all of this points in one direction: AI is evolving from a tool into infrastructure. Once a technology becomes infrastructure, the resulting changes are not incremental, but structural.

From this perspective, the true significance of the interview may not be in predicting the future, but in offering a judgment: we may already be at the starting point of “AI industrialization.”
